Sep 3, 20 / Lib 23, 04 11:20 UTC

What would you like to have or see on Asgardia that isn't there yet?

Jun 12, 20 / Can 24, 04 23:30 UTC

A little utility that will allow you to inline code in MSVC, ICC, GCC and Clang https://github.com/samuelalonsorodriguez/Inline
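
For readers who have not seen the technique: portable inlining across these compilers is usually done with a macro that expands to each compiler's own force-inline keyword. The following is a minimal sketch of that general idea only. The macro name `FORCE_INLINE` and the function `square` are hypothetical and are not taken from the linked repository, whose actual interface may differ.

```cpp
// Hypothetical macro (an assumption, not the linked utility's API).
// MSVC, and compilers running in MSVC-compatible mode, define _MSC_VER
// and understand __forceinline.
#if defined(_MSC_VER)
#  define FORCE_INLINE __forceinline
// GCC, Clang and ICC on Unix-like systems define __GNUC__ and accept
// the always_inline attribute.
#elif defined(__GNUC__) || defined(__clang__)
#  define FORCE_INLINE inline __attribute__((always_inline))
// Unknown compiler: fall back to a plain inline hint.
#else
#  define FORCE_INLINE inline
#endif

// Example function marked with the macro.
FORCE_INLINE int square(int x) {
    return x * x;
}
```

Even with such a macro the compiler may still refuse to inline in some cases (for example, recursive functions), so it is best treated as a strong hint.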

Jun 7, 20 / Can 19, 04 00:19 UTC

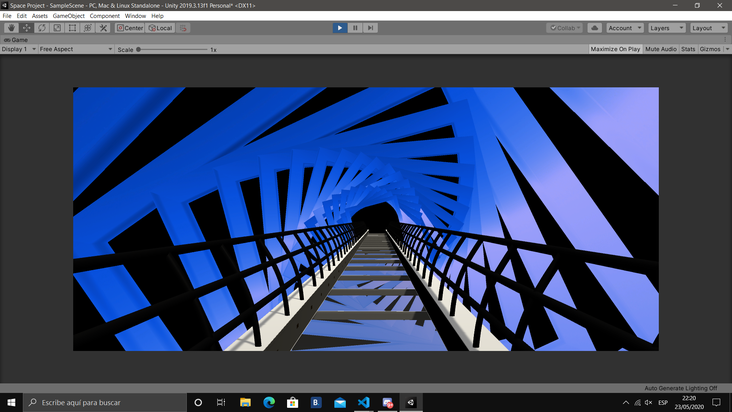

I can't see the light at the end of the tunnel, please help :(

Jun 7, 20 / Can 19, 04 00:42 UTC

At some point in their life, everyone feels like that. I felt like that a few weeks ago, but now I feel better. When we are in a dark place, it is hard to see the other people who are also there around us. Reach out and talk to someone, even to ones ...

May 28, 20 / Can 09, 04 05:17 UTC

Hey! It's me again. You may be getting used to reading my posts. Anyway, another of my projects is to create an encryption suite, similar to the SSL standard. It provides 4 features: AIKE (Advanced Iris Key Encryption): It's actually used to encrypt the public key, in case you want to. ...

May 27, 20 / Can 08, 04 02:30 UTC

Hey everybody, good news for all of you who want to get into the programming world: I'm going to offer a totally free C++ course, from the very basics to the most advanced topics. Do you want in? Just PM me! ;)

May 26, 20 / Can 07, 04 22:13 UTC

What do I want to achieve with all my work & projects? I want to show all my beloved Asgardians that there's always hope! You can develop whatever skills are required to do the job. Certainly, there are physical limits, but usually it's a problem of mental barriers. So ...

May 26, 20 / Can 07, 04 12:07 UTC

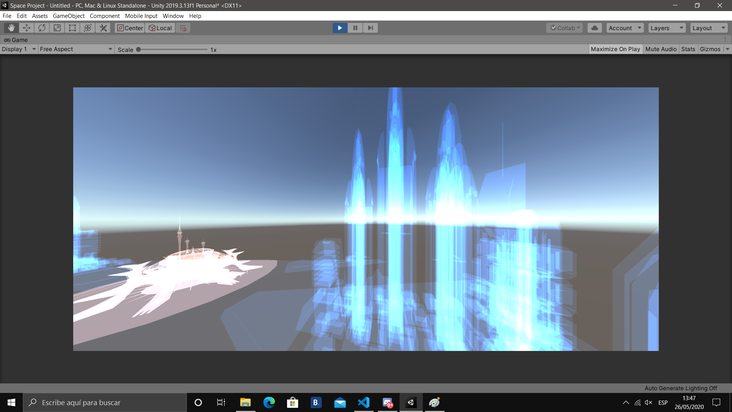

This is the holographic mode. Obviously, the Asgardia city will also be rendered in a solid mesh mode.

May 24, 20 / Can 05, 04 21:53 UTC

May 25, 20 / Can 06, 04 20:21 UTC

Check out what these English gentlemen did with Unreal Engine 4... https://arxiv.org/abs/1907.04298

May 24, 20 / Can 05, 04 09:08 UTC

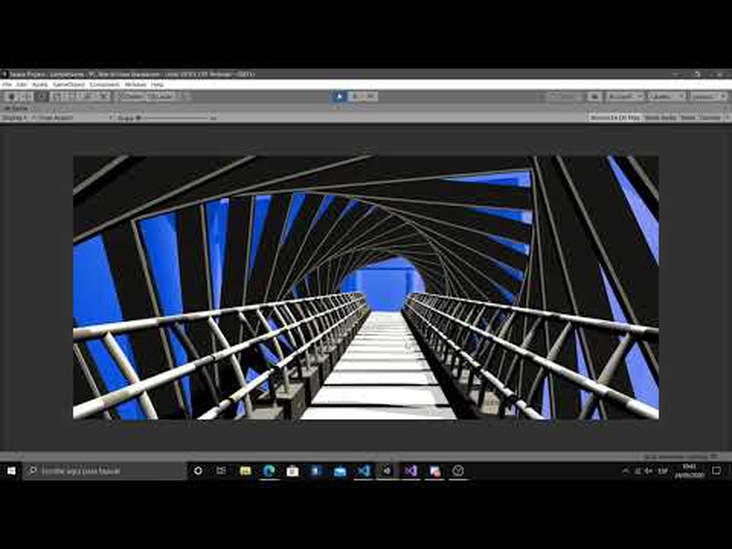

I worked so hard on this shader to get the right sine/cosine combo at an acceptable frame rate. Hope you all like it! It switches between the door portal and the portal with 2 columns on both sides!

May 21, 20 / Can 02, 04 20:40 UTC

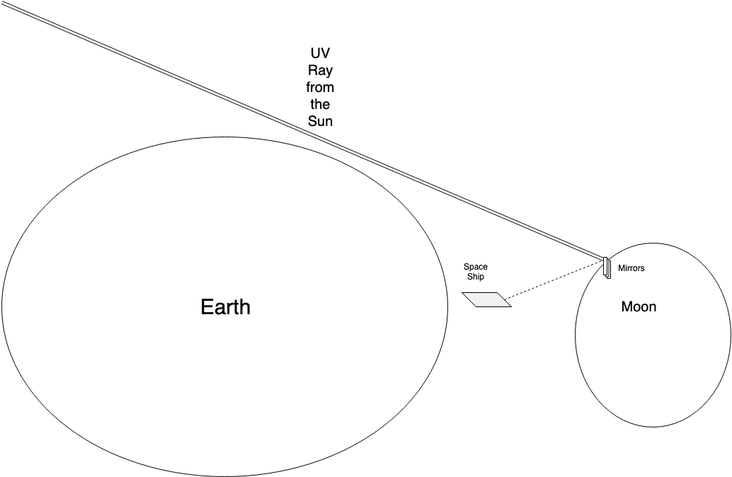

_______________________________________ UREL _______________________________________

·Objective:
-. Use ultraviolet light (type A) bounced from the Earth's surface/troposphere.

·Description:
-. Installation, on the bottom side of the ship, of a series of PV cells to convert the UV-A bounced from the Earth's surface/troposphere (due to CFC particles dividing ozone into chlorine monoxide and oxygen) into electric voltage. ...

Nov 4, 19 / Sag 00, 03 00:22 UTC

Okay, the mirrored moon project was a bit idealistic. Well, actually, a lot (that doesn't mean that we won't take any action in the future if we can), so… let's talk about something that is happening right now: the Iris Project, a brand new programming language. Short showcase that only shows ...

May 15, 20 / Gem 24, 04 22:47 UTC

Hey Chris, the situation with the language has changed a lot since then. That past idea was indeed interesting, and I implemented a naive client/server solution. However, after several tests, the connections from the client to the server were at a minimum (send the data and receive the response) at ~50 ...

May 15, 20 / Gem 24, 04 06:54 UTC

Interesting. I mainly use Scala and Rust nowadays. I'm interested in engineering machine learning solutions, often with the blockchain, and I dislike Python and Go. I see the theoretical attractions of the above, but I need mature languages/libraries. How might this work for Asgardia?

Nov 4, 19 / Sag 00, 03 00:48 UTC

- No function return types: fn foo() { … } However, we have directions on the function arguments, like: fn foo(in f32 inputVar, inout f32 referenceVar, out f32 returnVar) { … }
- No craziness in implementing move/copy semantics using l-value/r-value references: We know that kind of semantics are ...
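
For readers coming from C++, the argument directions in the first point can be pictured roughly as follows. This is only an analogy under assumptions, not Iris code: it treats `in` as pass-by-value, and `inout` and `out` as references, with `f32` mapped to `float`. The function body is invented purely for illustration.

```cpp
// C++ analogue (an assumption) of the Iris signature quoted above:
//   fn foo(in f32 inputVar, inout f32 referenceVar, out f32 returnVar)
// There is no return type; results leave through the reference parameters.
void foo(float inputVar,        // in: read only by the callee
         float& referenceVar,   // inout: read and modified
         float& returnVar) {    // out: only written
    referenceVar += inputVar;           // illustrative body, not from the post
    returnVar = referenceVar * 2.0f;
}
```

Calling `foo(2.0f, r, o)` with `r` initially `1.0f` leaves `r` at `3.0f` and stores `6.0f` in `o`.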

Oct 19, 19 / Oph 12, 03 04:07 UTC

Asgardia plans to put spaceships into Earth orbit in the near future. The design for these spaceships is pretty big, bigger than any satellite around us right now. So, how could we feed that monstrosity on the dark side of the Earth if the Sun's light doesn't show up ...

Oct 30, 19 / Oph 23, 03 21:37 UTC

If you are going to the trouble of installing sun-tracking mirrors en masse on the lunar surface, I would go the next step and use concentrated solar power (https://en.wikipedia.org/wiki/Concentrated_solar_power) to power operations on the surface and beam power into space simultaneously. Also, there isn't a system in place to give a 'gps' ...

Oct 30, 19 / Oph 23, 03 21:34 UTC

Agreed, a polar sun-synchronous orbit at high altitude would be ideal, unless the point is to have this spaceship or space station or whatever be a jumping-off point to go to the lunar surface? Mirrors shining like that would really bother a lot of astronomers on Earth, but solar panels or ...

Oct 18, 19 / Oph 11, 03 01:53 UTC

Hey! This is Iris Technologies. After a long time without any activity, we believe that Asgardia, as a formed nation (we believe it will happen eventually), needs to produce its own top-quality software in terms of performance & functionality. So it's time to move forward and develop our first C++ project (we ...

Oct 24, 19 / Oph 17, 03 17:26 UTC

I would love for us to have a system branded for Asgardia that prioritises development for all projects: set your programming skills, get a list of projects you can contribute to, send a push to git, get approved, and be rewarded X in SOL for growing our technology.

Oct 19, 19 / Oph 12, 03 02:10 UTC

It eventually will. We plan to add support for the 5 mainstream OSes (Android, Mac, Linux, iOS, Windows), as native and low-level (although we will use modern interfaces) as possible (we will use POSIX + OGLES + NDK + VULKAN + JNI & Java on Android, POSIX + Wayland + OGL + VULKAN ...

Apr 15, 19 / Tau 21, 03 04:03 UTC

This is probably the project of my life, and it is also the dream of many others. Today I'm glad to announce, on behalf of Iris Technologies, the technology of the century: the Iris device, which will transform science fiction into reality. Something like Aincrad and Nerve gear are ...

Apr 15, 19 / Tau 21, 03 12:57 UTC

I believe we are some decades away from this type of virtual world, but it is interesting to think about. Today we have VR and AR, where I can now post my resume or grocery list and view it in AR. I think the things to see coming out before ...
